Kamera:

The goal is to build a handheld system that captures video at 4K 60 fps, streams it, and displays it.

For now I'm using:

I'm using a simple Ubuntu Server install with GStreamer.

I tried displaying the stream without a display server, but piping the video directly into the TTY framebuffer caused color display issues.

So I'm using Sway, as it's lightweight and easy to configure.

At 4K 60 fps the stream runs at 128 Mbit/s, so the Raspberry Pi 5's Wi-Fi bandwidth should be enough.

It would allow me to have a completely wireless setup, but it costs too much for now.
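As a rough sanity check on the 128 Mbit/s figure, here is the arithmetic as a small shell sketch. It assumes 128 Mbit/s means 128,000,000 bit/s and uses NV12 (12 bits per pixel) as the uncompressed reference. The variable names are only for illustration.

```shell
#!/bin/sh
# Figures taken from the notes: 128 Mbit/s at 3840x2160, 60 fps.
# Assumption: "Mbit" is decimal (1 Mbit = 1,000,000 bit).
BITRATE_BPS=128000000
FPS=60
WIDTH=3840
HEIGHT=2160

# Average size of one JPEG frame in bytes: bits per second / fps / 8.
FRAME_BYTES=$((BITRATE_BPS / FPS / 8))

# Bitrate of the same stream as uncompressed NV12 (12 bits per pixel).
# Needs 64-bit shell arithmetic, which is the norm on 64-bit Linux.
RAW_BPS=$((WIDTH * HEIGHT * 12 * FPS))

echo "average JPEG frame: ${FRAME_BYTES} bytes"
echo "uncompressed NV12:  $((RAW_BPS / 1000000)) Mbit/s"
echo "compression ratio:  about $((RAW_BPS / BITRATE_BPS)):1"
```

So each MJPEG frame averages a bit under 270 kB, and the link carries roughly 1/46 of what raw NV12 would need.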

Transmission:

gst-launch-1.0 \
v4l2src device=/dev/video0 io-mode=2 ! "image/jpeg,width=3840,height=2160,framerate=60/1" ! jpegparse ! tee name=t \
t. ! queue max-size-buffers=2 leaky=downstream ! rtpjpegpay ! udpsink host="<receiver ip>" port=5000 \
t. ! queue max-size-buffers=2 leaky=downstream ! jpegdec ! autovideosink sync=false

Receiver:

gst-launch-1.0 udpsrc port="5000" caps='application/x-rtp, media=(string)video, clock-rate=(int)90000, encoding-name=(string)JPEG, a-framerate=(string)60.000000, x-dimensions=(string)"3840\,2160", payload=(int)26, ssrc=(uint)2460905729, timestamp-offset=(uint)952333192, seqnum-offset=(uint)78' ! rtpjpegdepay ! "image/jpeg,width=3840,height=2160,framerate=60/1" ! jpegdec ! queue ! autovideosink sync=false

For now, I haven't managed to pipe this into a virtual camera.

I tried both v4l2sink and pipewiresink, but each has issues.

I found that with pipewiresink, OBS only accepts the NV12 pixel format for now.

So I'm waiting on this pull request to add MJPEG and H.264.

That will make things much simpler, as the stream won't need to be decoded on the GStreamer side.

I also found that with v4l2sink I should create the loopback device with a higher, dedicated device number, 10 for example.

sudo modprobe v4l2loopback max_buffers=4 video_nr=10

But it's still causing issues with caps negotiation.

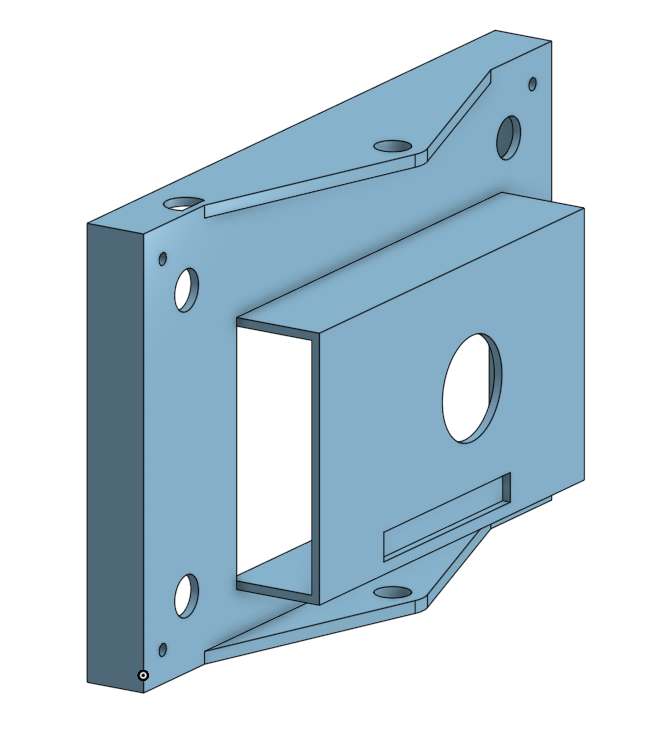
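One thing that might be worth trying for the v4l2sink negotiation problem is to decode, convert, and pin an explicit raw format right before the sink. This is an untested guess, not a confirmed fix. The sketch below reuses the receiver caps from above without the session-specific fields (ssrc, timestamp-offset, seqnum-offset), assuming they aren't needed. The `YUY2` format and the `LOOPBACK_DEV`, `RAW_FORMAT`, `PORT`, and `RUN` variables are hypothetical choices for illustration. It only prints the command unless `RUN=1` is set.

```shell
#!/bin/sh
# Sketch only: assumes that pinning a raw format helps v4l2sink negotiate.
LOOPBACK_DEV="${LOOPBACK_DEV:-/dev/video10}"  # matches video_nr=10 from the modprobe line
RAW_FORMAT="${RAW_FORMAT:-YUY2}"              # assumed format; try another if the consumer rejects it
PORT="${PORT:-5000}"

# Receiver caps from the notes, minus ssrc/timestamp-offset/seqnum-offset.
# x-dimensions is kept so the depayloader knows the 4K frame size.
RTP_CAPS='application/x-rtp, media=(string)video, clock-rate=(int)90000, encoding-name=(string)JPEG, a-framerate=(string)60.000000, x-dimensions=(string)"3840\,2160", payload=(int)26'

# Build the command as positional parameters so each argument stays intact.
set -- gst-launch-1.0 \
  udpsrc port="$PORT" caps="$RTP_CAPS" ! \
  rtpjpegdepay ! jpegdec ! videoconvert ! \
  "video/x-raw,format=$RAW_FORMAT" ! \
  v4l2sink device="$LOOPBACK_DEV" sync=false

# Printed for inspection only (shell quoting is not preserved in this string).
PIPELINE_CMD="$*"
echo "$PIPELINE_CMD"

# Dry run by default; set RUN=1 to actually start the pipeline.
if [ "${RUN:-0}" = "1" ]; then
  "$@"
fi
```

Running it as `RUN=1 RAW_FORMAT=NV12 sh virtualcam.sh` (the script name is made up) would try the same pipeline with a different raw format, which is a quick way to see which formats the loopback consumer accepts.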

Here are some pictures of the case I made:

(Print pictures not up to date)